The Problem With Smart Robots That Can't Touch Anything

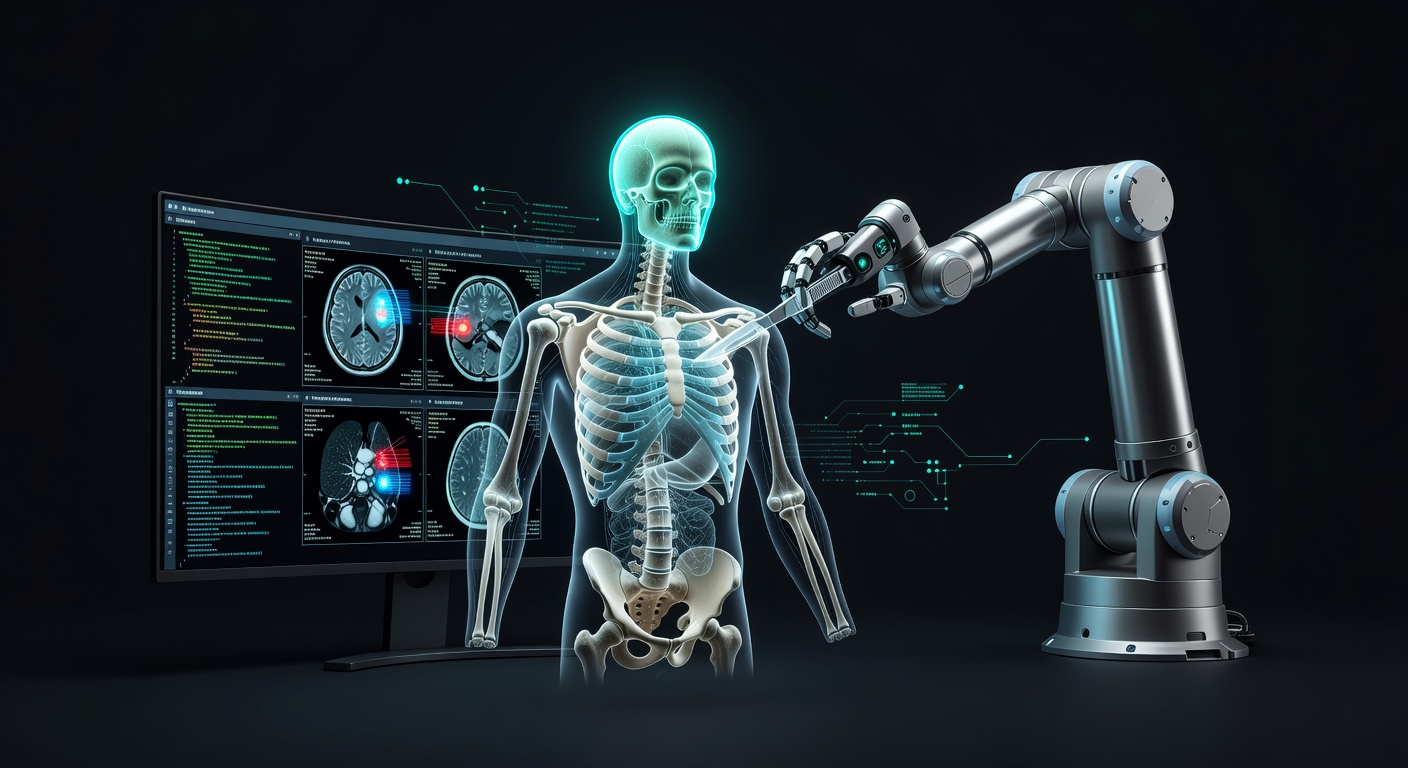

Here's something that's always bugged me about medical AI: we've built incredibly sophisticated systems that, in some studies, spot cancer in medical images as well as or better than radiologists on specific tasks, but ask them to hand you a scalpel and they're completely useless.

Think about it: most healthcare AI today is basically a really advanced pattern-matching system. It looks at images, reads data, and makes predictions. But medicine isn't just about seeing problems; it's about fixing them. And fixing things requires robots that can move, touch, apply pressure, and react to what they feel in real time.

Enter the Game-Changer: Open-H-Embodiment

This is where the new Open-H-Embodiment dataset comes in, and honestly, I'm pretty excited about it. It's the first major attempt to teach robots how to actually perform medical tasks, not just analyze them.

What makes this special? Instead of just feeding robots thousands of medical images, this dataset includes:

- Real robot movements during actual procedures

- Force feedback data (how hard to push, when to pull back)

- Synchronized vision so robots can see and feel simultaneously

- Cross-platform compatibility so different robot types can learn from each other
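To make that list a bit more concrete, here's a rough sketch of what one synchronized timestep might look like in code. To be clear, this is not the actual Open-H-Embodiment schema, which I haven't shown here: every name below (`Step`, `force_n`, `nearest_index`, `synchronize`) is made up for illustration. The idea is just that robot state and force readings usually arrive faster than camera frames, so each robot sample gets paired with the camera frame closest to it in time.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Step:
    """One synchronized timestep. All field names are hypothetical."""
    t: float                      # seconds since the start of the recording
    joint_positions: List[float]  # robot movement at time t
    force_n: float                # contact force in newtons at time t
    frame_index: int              # index of the camera frame nearest to t


def nearest_index(timestamps: Sequence[float], t: float) -> int:
    """Return the index of the timestamp closest to t.

    Assumes `timestamps` is non-empty and sorted ascending.
    Ties go to the earlier timestamp.
    """
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    return i if (after - t) < (t - before) else i - 1


def synchronize(
    robot_t: Sequence[float],
    joints: Sequence[Sequence[float]],
    forces: Sequence[float],
    frame_t: Sequence[float],
) -> List[Step]:
    """Pair each robot/force sample with its nearest camera frame."""
    return [
        Step(t, list(q), f, nearest_index(frame_t, t))
        for t, q, f in zip(robot_t, joints, forces)
    ]
```

Calling `synchronize` with robot samples every 10 ms and camera frames every 33 ms gives you a list of `Step` objects where several consecutive steps point at the same frame. A real pipeline would likely also need interpolation, clock-offset correction, and per-robot kinematics, none of which this sketch attempts.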

Why This Matters More Than You Think

The collaboration behind this is pretty impressive too—35 organizations from Johns Hopkins to NVIDIA all working together. That's not something you see every day in the competitive world of medical technology.

But here's what really gets me excited: we're finally moving beyond the "look but don't touch" phase of medical AI. Imagine surgical robots that can learn techniques from thousands of procedures, or ultrasound machines that can automatically adjust pressure based on patient comfort and image quality.

The Real-World Impact

This isn't just about making cooler robots (though that's fun too). We're talking about:

- Democratizing expertise: A robot trained on the best surgeons' techniques could assist in underserved areas

- Consistency: Less variation based on how tired the doctor is

- Learning from mistakes: Every procedure becomes a learning opportunity for the entire system

The Road Ahead

Of course, we're still in the early days. Teaching a robot to perform surgery is vastly more complex than teaching it to play chess. But having a proper dataset is like giving researchers a common language to work with.

What I find most promising is the open, collaborative approach. Instead of every company building their own isolated system, we're seeing genuine cooperation toward a shared goal. That's exactly what we need to tackle challenges this complex.

The future where your surgeon has an AI assistant that never gets tired, never has a bad day, and has learned from millions of procedures? It's starting to look less like science fiction and more like an inevitable next step.