This AI Can Watch Multiple Players in Minecraft at Once — And It's Kind of Mind-Blowing

Have you ever wondered what it would be like if an AI could see through the eyes of multiple players in a video game at the same time? Well, wonder no more — because researchers have just made it happen, and it's pretty incredible.

The Problem Nobody Was Talking About

Here's something I never really thought about until reading about this research: most AI "world models" (systems that predict the next frames of a game from a player's inputs) can only handle one player's perspective at a time. It's like they have tunnel vision.

But think about it — in the real world, if you're playing catch with a friend and you throw a ball, that action doesn't just affect what you see. Your friend sees the ball coming toward them, any bystanders see it flying through the air, and everyone's view needs to make sense together. This is called "multi-agent consistency," and it turns out it's really hard for AI to get right.

Enter Solaris: The Multi-Perspective AI

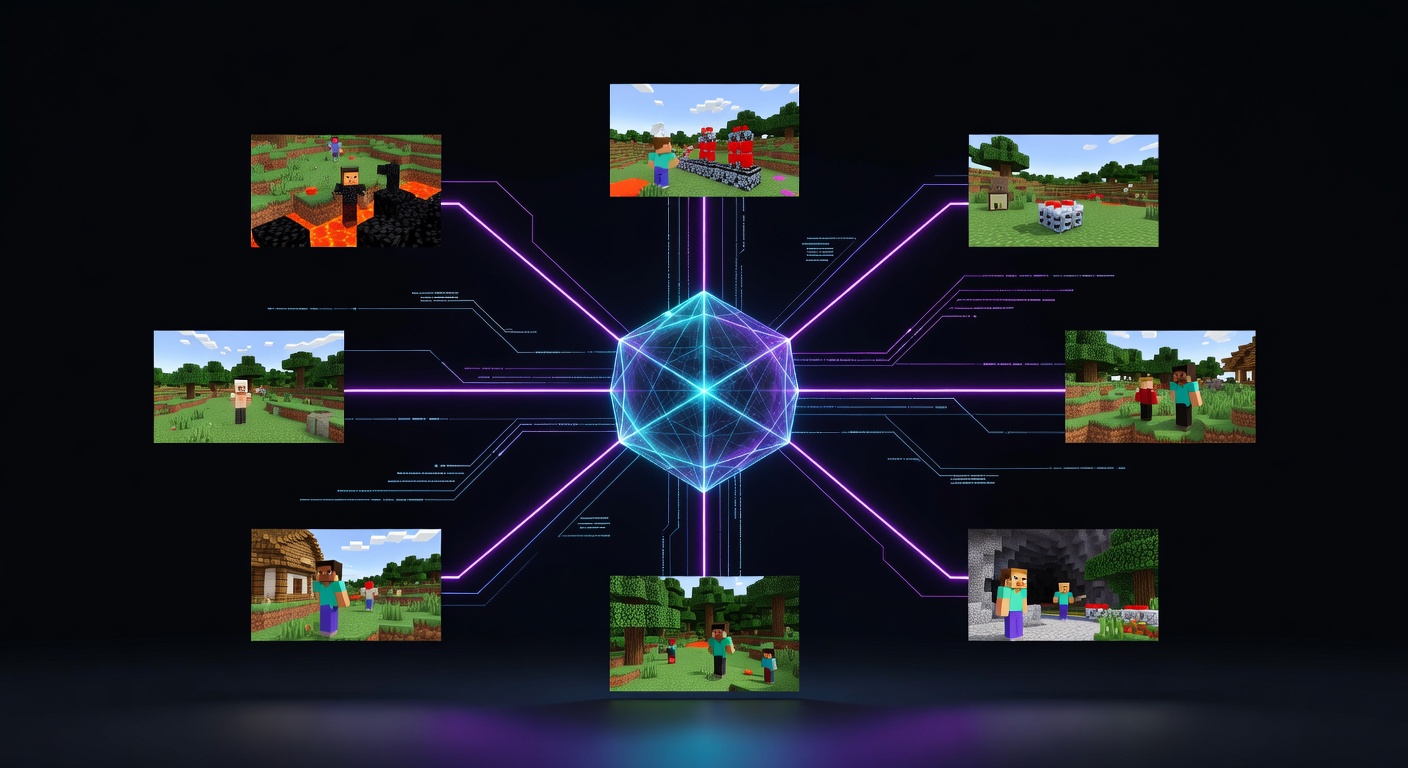

A team of researchers decided to tackle this challenge by creating something called Solaris — an AI system that can simulate multiple players' viewpoints in Minecraft simultaneously. And honestly, the results are kind of magical.

What makes this so impressive? Imagine two players standing across from each other in Minecraft. Player A places a block. Solaris doesn't just show that block appearing in Player A's view. It also shows the same block appearing in Player B's view, from a completely different angle, with proper lighting, shadows, and occlusion, and the two views stay consistent with each other.
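
One way to picture why this is hard: every player's screen is a different projection of the same shared world. The toy below is a minimal sketch that assumes a simple pinhole camera that only turns left and right (yaw). It stores one shared set of blocks and projects that set into two players' views. The function names, camera model, and coordinates are illustrative assumptions, not how Solaris works internally. Solaris has to learn this kind of consistency from pixels rather than computing it.

```python
import math

def project(point, cam_pos, yaw_deg, focal=100.0):
    """Project a 3D world point onto a toy pinhole camera's screen.

    The camera sits at cam_pos and, at yaw 0, looks along +z; positive yaw
    turns it toward +x. Returns (u, v) relative to the screen centre
    (u to the right, v up), or None if the point is behind the camera.
    """
    dx, dy, dz = (p - c for p, c in zip(point, cam_pos))
    yaw = math.radians(yaw_deg)
    # Express the offset in the camera's own axes (right, forward).
    right = dx * math.cos(yaw) - dz * math.sin(yaw)
    forward = dx * math.sin(yaw) + dz * math.cos(yaw)
    if forward <= 0:
        return None  # behind the player, so not on screen
    # Perspective divide: farther blocks land closer to the centre.
    return (focal * right / forward, focal * dy / forward)

def render(world, player):
    """Screen positions of every block this player can see (toy: no occlusion)."""
    cam_pos, yaw = player
    views = {}
    for pos, block in world.items():
        uv = project(pos, cam_pos, yaw)
        if uv is not None:
            views[pos] = (block, uv)
    return views

# One shared world; both players' views are derived from it.
world = {}
player_a = ((0, 1, 0), 0)     # standing at the origin, looking along +z
player_b = ((6, 1, 5), -90)   # standing off to the side, looking along -x

world[(1, 2, 5)] = "stone"      # Player A places a block
print(render(world, player_a))  # right of and above the centre of A's screen
print(render(world, player_b))  # horizontally centred on B's screen
```

Because both screens are computed from the single `world` dict, placing the block updates both views at once. A video model that generates each player's frames has no such explicit shared state to lean on, which is exactly the consistency challenge.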

Why Minecraft Makes Perfect Sense

The researchers chose Minecraft as their testing ground, and it's actually brilliant. Here's why:

It's incredibly complex visually. Unlike simpler 2D games, Minecraft's 3D world means the AI has to handle perspective changes, objects going behind other objects, and spatial reasoning that would make your head spin.

Things change constantly. Players are always building, destroying, moving around. The AI needs to track all these changes and show them correctly from every angle.

It's unpredictable. Random mobs spawn, weather changes, and environmental events happen. The AI has to distinguish between changes caused by players versus random game events.
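
With access to the game's internal state, separating the two kinds of change is simple bookkeeping. The hard part for a video model is that it only sees pixels. The sketch below assumes hypothetical block-level snapshots of the world and a per-player log of which blocks each player targeted. It then splits every changed block into player-caused and environment-caused changes. None of these names come from the Solaris paper.

```python
def changed_positions(before, after):
    """Positions whose block differs between two world snapshots (missing = air)."""
    return {p for p in before.keys() | after.keys() if before.get(p) != after.get(p)}

def attribute_changes(before, after, player_targets):
    """Split changes into those explained by a player's action and the rest.

    player_targets maps a player name to the set of block positions that
    player mined or placed during the step (a hypothetical action log).
    """
    changed = changed_positions(before, after)
    by_player = {name: changed & targets for name, targets in player_targets.items()}
    explained = set().union(*by_player.values())
    environment = changed - explained  # e.g. snowfall, fire spread, mob griefing
    return by_player, environment

before = {(0, 64, 0): "dirt"}
after = {(1, 65, 0): "stone", (3, 65, 3): "snow"}
targets = {"A": {(0, 64, 0)}, "B": {(1, 65, 0)}}
by_player, environment = attribute_changes(before, after, targets)
print(by_player)    # A mined the dirt, B placed the stone
print(environment)  # the snow was not caused by either player
```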

The Secret Sauce: A Massive Data Collection System

Here's where things get really interesting. To train an AI like this, you need enormous amounts of data showing what multiplayer gameplay actually looks like. But here's the catch — there wasn't any good system for collecting this kind of data automatically.

So the researchers built their own system, called SolarisEngine. Picture this: they created AI bots that play Minecraft together, mining, building, fighting, and exploring. These bots generated over 12 million frames of multiplayer footage: hours and hours of synchronized gameplay captured from multiple perspectives.
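
To put "over 12 million frames" in perspective, the conversion to hours depends on the recording frame rate, which this article doesn't state. The quick calculation below assumes 20 frames per second (Minecraft's game tick rate) purely for illustration. It also shows how the figure shrinks if every moment of gameplay is captured from two players at once, since each shared moment then accounts for two frames. The two-player count is also an assumption.

```python
def footage_hours(total_frames, fps, views_per_moment=1):
    """Hours of gameplay represented by total_frames.

    views_per_moment: how many synchronized player views are recorded for
    each moment of gameplay (each view contributes its own frame).
    """
    seconds = total_frames / (fps * views_per_moment)
    return seconds / 3600

# Illustrative numbers only: the 20 fps rate and 2-player count are assumptions.
print(round(footage_hours(12_000_000, fps=20), 1))                      # → 166.7
print(round(footage_hours(12_000_000, fps=20, views_per_moment=2), 1))  # → 83.3
```

At a lower frame rate the same frame count covers more hours, so treat these as rough orders of magnitude rather than figures from the paper.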

The best part? This system can run continuously, generating new training data 24/7. It's like having a bunch of AI players grinding away in Minecraft servers just to create training material for other AI systems.
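
A pipeline that "runs continuously" maps naturally onto a producer that never stops and a consumer that pulls as much data as it needs. The sketch below assumes a hypothetical setup in which bots pick from four high-level actions and each recorded "frame" is a placeholder dict rather than a rendered image. It is not SolarisEngine's real interface. What it does show is the synchronization idea: every bot's record in a step shares the same tick index, so the views line up in time.

```python
import itertools
import random

ACTIONS = ["mine", "build", "fight", "explore"]  # hypothetical high-level actions

def episodes(num_bots=2, ticks_per_episode=5, seed=0):
    """Yield synchronized multiplayer episodes forever (an infinite generator)."""
    rng = random.Random(seed)
    for episode_id in itertools.count():
        steps = []
        for tick in range(ticks_per_episode):
            # Every bot acts on the same tick; each record stands in for
            # the frame that bot would see, stamped with the shared tick.
            steps.append({
                f"bot{b}": {"tick": tick, "action": rng.choice(ACTIONS)}
                for b in range(num_bots)
            })
        yield {"episode": episode_id, "steps": steps}

# The consumer decides how much to take; the producer never has to stop.
batch = list(itertools.islice(episodes(), 3))
print(len(batch), [len(ep["steps"]) for ep in batch])  # → 3 [5, 5, 5]
```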

The Technical Magic Behind the Curtain

Without getting too deep into the weeds, Solaris uses something called a "video diffusion model." Think of it like this: the AI starts with noise and gradually refines it into coherent video frames, but it does this while keeping track of multiple viewpoints simultaneously.
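
For readers who want to see the "start with noise and refine" loop concretely, here is a minimal sketch of deterministic DDIM-style sampling, a standard diffusion sampler, applied to a tiny stack of player views. The cosine noise schedule, the function names, and especially `oracle_denoiser` are assumptions for illustration. In a real system a trained network predicts the clean frames, and this is not Solaris's actual sampler or architecture. The one idea borrowed from the article is that the denoiser receives all players' views in a single call, so it has the chance to make them agree.

```python
import math
import random

def alpha_bar(t, T):
    """Cosine noise schedule: 1.0 at t=0 (clean) down to ~0 at t=T (pure noise)."""
    return math.cos((t / T) * math.pi / 2) ** 2

def sample(denoise, view_sizes, T=50, seed=0):
    """Deterministic DDIM-style sampling over several player views at once.

    denoise(x, t) receives ALL views together and returns its guess of the
    clean views; a real multi-view model would use the other views here.
    """
    rng = random.Random(seed)
    # Start from pure Gaussian noise: one list of "pixels" per player view.
    x = [[rng.gauss(0.0, 1.0) for _ in range(n)] for n in view_sizes]
    for t in range(T, 0, -1):
        ab_t, ab_prev = alpha_bar(t, T), alpha_bar(t - 1, T)
        x0_hat = denoise(x, t)  # one call covering every viewpoint
        x = [
            [
                # Move to the next, less noisy level (DDIM with eta = 0),
                # reusing the noise implied by the current sample.
                math.sqrt(ab_prev) * x0
                + math.sqrt(1.0 - ab_prev) * (xt - math.sqrt(ab_t) * x0) / math.sqrt(1.0 - ab_t)
                for xt, x0 in zip(view, view_x0)
            ]
            for view, view_x0 in zip(x, x0_hat)
        ]
    return x

# Hypothetical "clean" frames: two players, three pixel values each.
target = [[0.2, 0.8, 0.5], [0.5, 0.2, 0.8]]

def oracle_denoiser(x, t):
    # Stand-in for a trained network: it simply knows the right answer.
    return target

result = sample(oracle_denoiser, view_sizes=[3, 3])
print([[round(p, 3) for p in view] for view in result])  # → [[0.2, 0.8, 0.5], [0.5, 0.2, 0.8]]
```

With a perfect denoiser the loop lands exactly on the target. With a learned one, each step's prediction is only approximate, and the repeated small refinements are what gradually turn noise into coherent frames.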

They also implemented something called "Checkpointed Self Forcing" — essentially a memory-efficient way for the AI to learn from longer sequences without running out of computational resources. It's like teaching the AI to remember important moments without keeping everything in its head at once.
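
The article doesn't spell out the mechanics, so the sketch below rests on two assumptions. The first is that "Self Forcing" means the model generates each new frame from its own earlier outputs during training, just as it must at play time, rather than from ground-truth frames. The second is that "Checkpointed" refers to the standard memory-saving trick of storing only some intermediate results and recomputing the rest when needed. The `toy_model` function is a stand-in for the video model, and all names are hypothetical.

```python
def rollout(step, first_frame, num_frames, every=4):
    """Autoregressive rollout that keeps only every `every`-th frame.

    Each frame is produced from the model's OWN previous output (the
    self-forcing idea), never from a ground-truth frame.
    """
    checkpoints = {0: first_frame}
    frame = first_frame
    for i in range(1, num_frames):
        frame = step(frame)
        if i % every == 0:
            checkpoints[i] = frame  # stored; frames in between are discarded
    return checkpoints

def recompute(step, checkpoints, index, every=4):
    """Rebuild any frame by replaying from the nearest earlier checkpoint."""
    start = (index // every) * every
    frame = checkpoints[start]
    for _ in range(index - start):
        frame = step(frame)
    return frame

def toy_model(frame):
    # Hypothetical deterministic "next frame" function standing in for the model.
    return (3 * frame + 1) % 101

cps = rollout(toy_model, first_frame=7, num_frames=20, every=4)
print(sorted(cps))                              # → [0, 4, 8, 12, 16]
print(recompute(toy_model, cps, 10, every=4))   # frame 10, rebuilt from checkpoint 8
```

Here 20 frames cost only 5 stored states, at the price of replaying at most 3 steps to recover any dropped frame. In real training the stored items would be network activations needed for the backward pass, which is where the memory savings matter.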

Why This Actually Matters

You might be thinking, "Cool, but why should I care about AI playing Minecraft?" Fair question! But this technology has implications far beyond gaming.

Robotics: Imagine robots that need to coordinate tasks while maintaining awareness of each other's perspectives and actions.

Autonomous vehicles: Self-driving cars need to understand how their actions appear to other vehicles and pedestrians.

Virtual training: This could revolutionize how we create simulated environments for training everything from emergency responders to surgeons.

The Bigger Picture

What excites me most about Solaris isn't just that it works — it's that the researchers are open-sourcing everything. The data collection system, the models, the evaluation framework — they're giving it all away for free.

This feels like one of those pivotal moments where a new research direction gets unlocked. We're moving from AI that can only see through one set of eyes to AI that can maintain a coherent understanding of multi-agent environments.

Sure, we're still talking about Minecraft blocks and pixelated environments. But every revolutionary AI system starts somewhere simple before it changes the world. And something tells me we're going to look back at Solaris as one of those important first steps.

The future of AI isn't just about making smarter individual agents — it's about creating AI that can understand and simulate the complex, multi-perspective reality we all live in. And that future just got a little bit closer.

Source: https://arxiv.org/pdf/2602.22208