The New School Yard Bully Has an Algorithm

We've all been there – that one teacher who made your life miserable, or maybe just gave you a pop quiz on a Friday. But what used to be whispered complaints in hallways has evolved into something far more sinister and sophisticated.

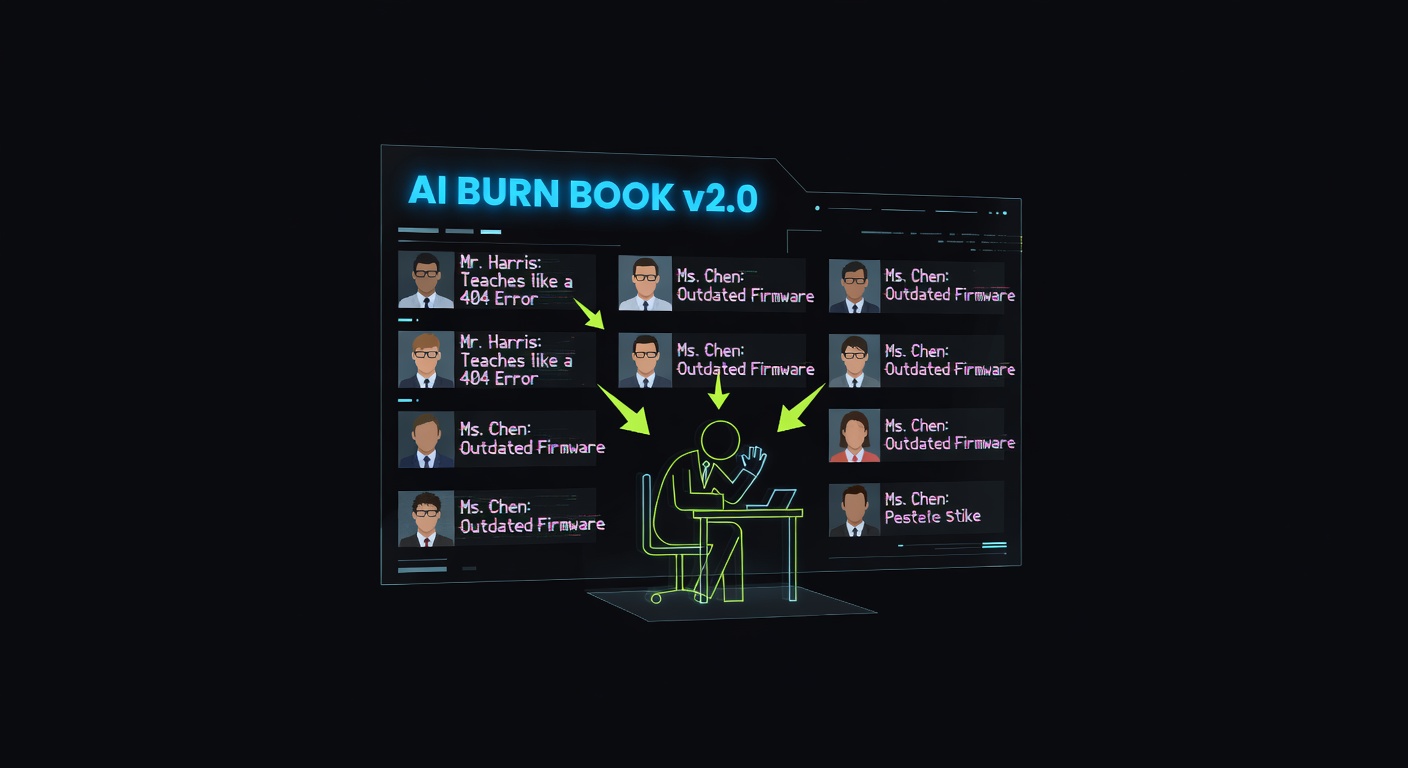

Today's students aren't just venting to their friends anymore. They're leveraging artificial intelligence to create entire websites dedicated to mocking their teachers. Think of it as the digital evolution of the classic "Burn Book" from Mean Girls, except now it's powered by algorithms and can reach a global audience.

How It Actually Works

Here's the scary part – creating these "slander pages" is becoming ridiculously easy. Students can use AI writing tools to generate harsh, sometimes fabricated stories about their educators. They can create fake social media profiles, generate convincing but false reviews, and even use AI image tools to create embarrassing memes or doctored photos.

The anonymity factor makes it even worse. Unlike traditional bullying, where you at least knew who your tormentor was, these AI-powered attacks can come from anyone, making it nearly impossible for teachers to defend themselves or even know who's behind the harassment.

Why This Matters More Than You Think

Look, I get it – we were all teenagers once, and some teachers really do suck. But here's the thing that worries me: we're witnessing the democratization of sophisticated harassment tools. What used to require technical skills or at least some effort now takes just a few clicks and prompts to an AI chatbot.

This isn't just about hurt feelings (though that's bad enough). Teachers are reporting real impacts on their mental health, job prospects, and even personal relationships. When false information spreads online, it can stick around indefinitely and follow someone for years.

The Bigger Picture: AI Ethics in Teen Hands

This situation highlights a massive blind spot in how we're rolling out AI technology. We're essentially giving teenagers access to incredibly powerful tools without really teaching them about the ethical implications or potential consequences.

It's like handing a loaded weapon to someone without teaching them about gun safety – except in this case, the weapon is algorithmic and the damage can be just as real and long-lasting.

What Can We Actually Do About It?

The solution isn't to ban AI (good luck with that), but rather to get serious about digital literacy education. Students need to understand that just because they can use AI to create harmful content doesn't mean they should.

Schools also need better reporting mechanisms and clearer consequences for this type of behavior. And let's be honest – parents need to have some uncomfortable conversations with their kids about empathy and the real people behind the screen names.

My Take: Where Do We Go From Here?

As someone who's fascinated by AI's potential, I find this trend genuinely heartbreaking. We're watching an incredible technology get weaponized for petty revenge and genuine cruelty. It's like using a Ferrari to run over mailboxes – such a waste of amazing potential.

The genie's out of the bottle when it comes to AI accessibility, and that's probably a good thing overall. But we desperately need to catch up on the human side of the equation – teaching empathy, digital responsibility, and the understanding that there are real people with real feelings on the other end of these AI-generated attacks.

What do you think? Have you seen this happening in schools around you? More importantly, how do we teach the next generation to use these powerful tools responsibly?

Source: https://www.wired.com/story/teens-are-using-ai-fueled-slander-pages-to-mock-their-teachers