When AI Breaks the Social Contract: The Chardet Drama That Has Open Source Developers Fighting

Sometimes the biggest controversies in tech start with the most mundane-sounding changes. Last week, a Python library called chardet shipped an update that has the open source world in an uproar, and honestly, it's one of the most fascinating ethical dilemmas I've seen in years.

What Actually Happened

Here's the story: Dan Blanchard maintains chardet, a Python library that figures out what character encoding text files are using. It's incredibly popular — we're talking about 130 million downloads per month. That's a lot of websites, apps, and systems depending on this little piece of code.
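To make "figuring out an encoding" a bit more concrete, here's a minimal sketch of the simplest possible approach: try a few candidate encodings in order and keep the first one that decodes cleanly. To be clear, this is not how chardet works, and a real detector has to do far more than this. The `guess_encoding` function and its candidate list are names I made up for illustration.

```python
# Hypothetical helper for illustration only; NOT chardet's algorithm or API.
# Candidates are ordered from strictest to most permissive.
CANDIDATES = ("ascii", "utf-8", "latin-1")


def guess_encoding(data: bytes, candidates=CANDIDATES):
    """Return the first candidate encoding that decodes `data` without error.

    Returns None if no candidate can decode the bytes.
    """
    for name in candidates:
        try:
            data.decode(name)
        except UnicodeDecodeError:
            continue  # Not valid in this encoding; try the next one.
        return name
    return None


if __name__ == "__main__":
    print(guess_encoding(b"hello"))
    print(guess_encoding("café".encode("utf-8")))
    print(guess_encoding("café".encode("latin-1")))
```

Because `latin-1` accepts any byte sequence, it acts as a catch-all at the end of the list. That's also exactly why trial decoding alone can't tell similar single-byte encodings apart, and why a dedicated library exists for this job.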

Blanchard decided the library needed a major overhaul, so he did something pretty wild. Instead of gradually improving the existing code, he fed the API specification and test suite to Anthropic's Claude AI and asked it to rewrite the entire library from scratch. The result? Version 7.0 is 48 times faster than the old code and can spread its work across multiple CPU cores.

But here's where it gets spicy: he also changed the license from LGPL (a copyleft license) to MIT (a permissive license). His reasoning? Since the AI created entirely new code that shares less than 1.3% similarity with the original, this is a completely independent work, so he's not bound by the original license terms.
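It helps to see what a similarity figure like that could even mean. The source doesn't say which tool or metric produced the 1.3% number, so treat this as a sketch under my own assumptions: it compares two source files line by line using Python's standard `difflib`, and `similarity_percent` is a hypothetical helper name.

```python
from difflib import SequenceMatcher


def similarity_percent(old_source: str, new_source: str) -> float:
    """Line-level similarity between two source texts, as a percentage.

    Assumption: this is one possible metric, not necessarily the one
    behind the figure quoted in the chardet discussion.
    """
    old_lines = old_source.splitlines()
    new_lines = new_source.splitlines()
    # ratio() is 2 * matches / total lines across both inputs, in [0, 1].
    return 100.0 * SequenceMatcher(None, old_lines, new_lines).ratio()


if __name__ == "__main__":
    old = "def detect(data):\n    return legacy_scan(data)\n"
    new = "def detect(data):\n    return fast_scan(data)\n"
    print(f"{similarity_percent(old, new):.1f}% similar")
```

A low score from a metric like this only tells you that the text differs. Whether that makes the new code an independent work is a separate question.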

The original author, Mark Pilgrim, was not having it. He opened a GitHub issue arguing that you can't just AI-wash away your license obligations like that.

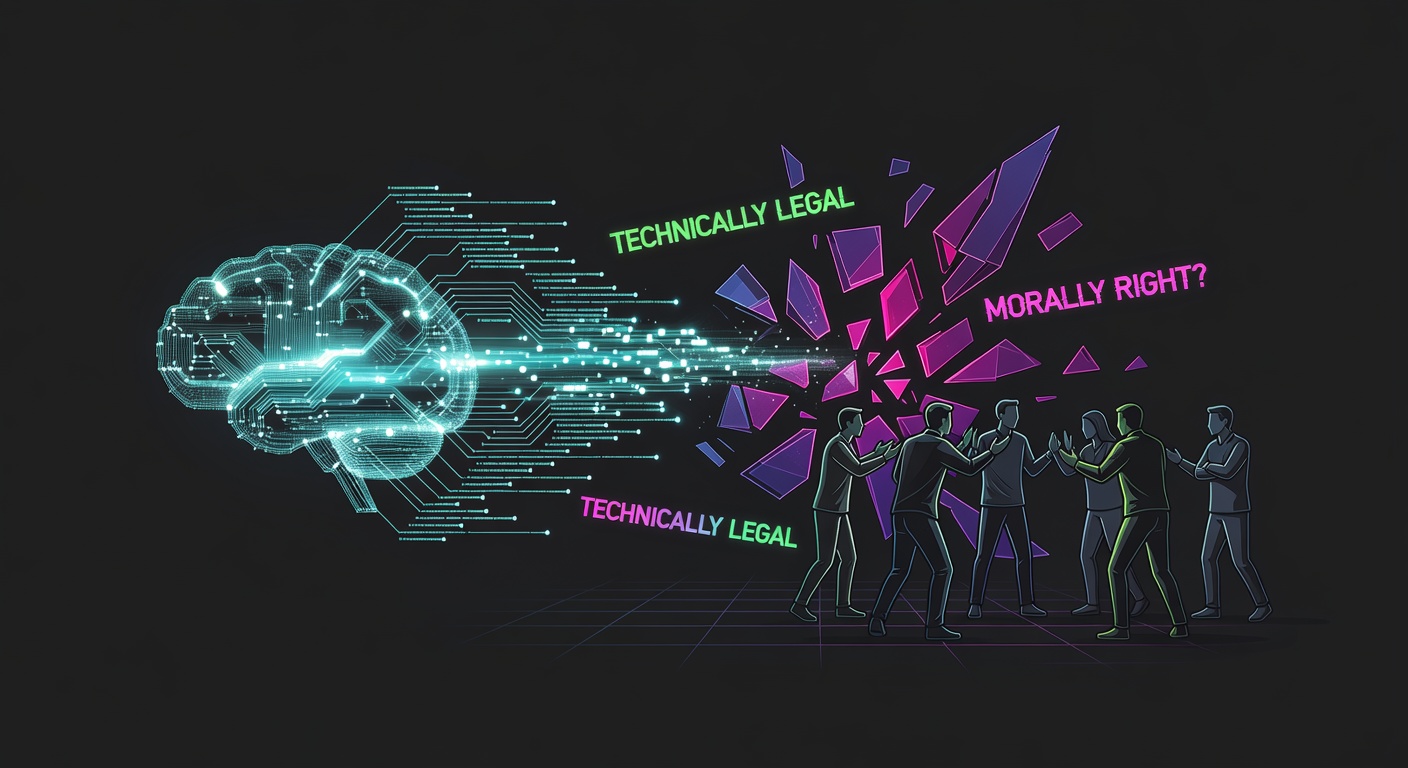

The Fundamental Question: Legal vs. Right

This drama perfectly illustrates something I think about a lot: just because you can do something doesn't mean you should.

Two big names in open source — Armin Ronacher (creator of Flask) and Salvatore Sanfilippo (creator of Redis) — both defended Blanchard's move. Their arguments basically boil down to: "It's legally permissible, so it's fine."

But that's where I think they're missing the point entirely.

Why This Feels Like Betrayal

Let me explain why this situation feels so wrong to many developers, even if it might be technically legal.

When someone releases code under the LGPL, they're making a deal with the community: "You can use this, modify it, build on it, but if you distribute a modified version, you have to share your changes under the same terms." It's like a potluck where everyone agrees to bring a dish and share recipes.

Over 12 years, dozens of developers contributed to chardet under this understanding. They spent their time and expertise making it better, trusting that future improvements would remain available to everyone under the same terms.

Now, suddenly, that protection is gone. Companies can take chardet 7.0, improve it, and keep those improvements private. The social contract that encouraged all those contributions has been unilaterally dissolved.

The Direction Matters

One of the defenses I keep seeing compares this to when the GNU project reimplemented UNIX utilities. "That was legal, and we celebrated it, so this should be fine too!"

But that comparison completely misses the direction of change. When GNU reimplemented UNIX, they were taking proprietary software and making it free. The arrow pointed toward more openness, more sharing, more freedom for users.

This chardet situation? The arrow points the other way. We're taking something that was protected for the commons and removing that protection. It's like turning a public park into private property — technically legal if you follow the right procedures, but fundamentally different in spirit.

The Real Stakes

This isn't really about one Python library. It's about what happens when AI makes it trivially easy to rewrite any piece of software while dodging license obligations.

If this approach becomes normalized, we could see a systematic erosion of copyleft protections across the open source ecosystem. Why contribute to a GPL project when a competitor can just AI-rewrite it under MIT next month?

The technical barriers that once made license circumvention difficult are disappearing. The question isn't whether this will happen more often — it's how the community will respond.

What This Means for the Future

I think we're heading toward a fork in the road for open source. One path leads to a world where permissive licenses dominate because copyleft becomes unenforceable. The other leads to new forms of copyleft that account for AI-assisted development.

Some people are already working on this. There are proposals for "training copyleft" licenses that would cover AI training data, and for "specification copyleft" licenses that would protect the API specifications and test suites that an AI uses as input.

The chardet controversy is just the beginning. We're going to see more cases like this, and each time we'll have to decide: Do we prioritize legal technicalities, or do we try to preserve the collaborative spirit that made open source work in the first place?

My Take

Look, I get why someone would want to escape copyleft restrictions. They can be inconvenient if you're trying to build a commercial product. But convenience isn't the same as ethics.

When you benefit from years of community contributions, I think you have a moral obligation to respect the terms under which those contributions were made. Using AI as a license-laundering tool feels like a violation of that trust, even if it's technically permissible.

The open source community has always been held together by social norms as much as legal frameworks. If we let those norms erode in favor of "whatever's technically legal," we might find ourselves with a very different kind of software ecosystem — one that's more extractive and less collaborative.

That's not the future I want to build.

Source: https://writings.hongminhee.org/2026/03/legal-vs-legitimate