The AI Trust Fall That Went Wrong

Well, this is awkward. The Department of Justice has essentially told Anthropic (you know, the company behind Claude) that it isn't military material. It's like being told you can't join the cool kids' table, except the cool kids' table involves weapons systems and national defense.

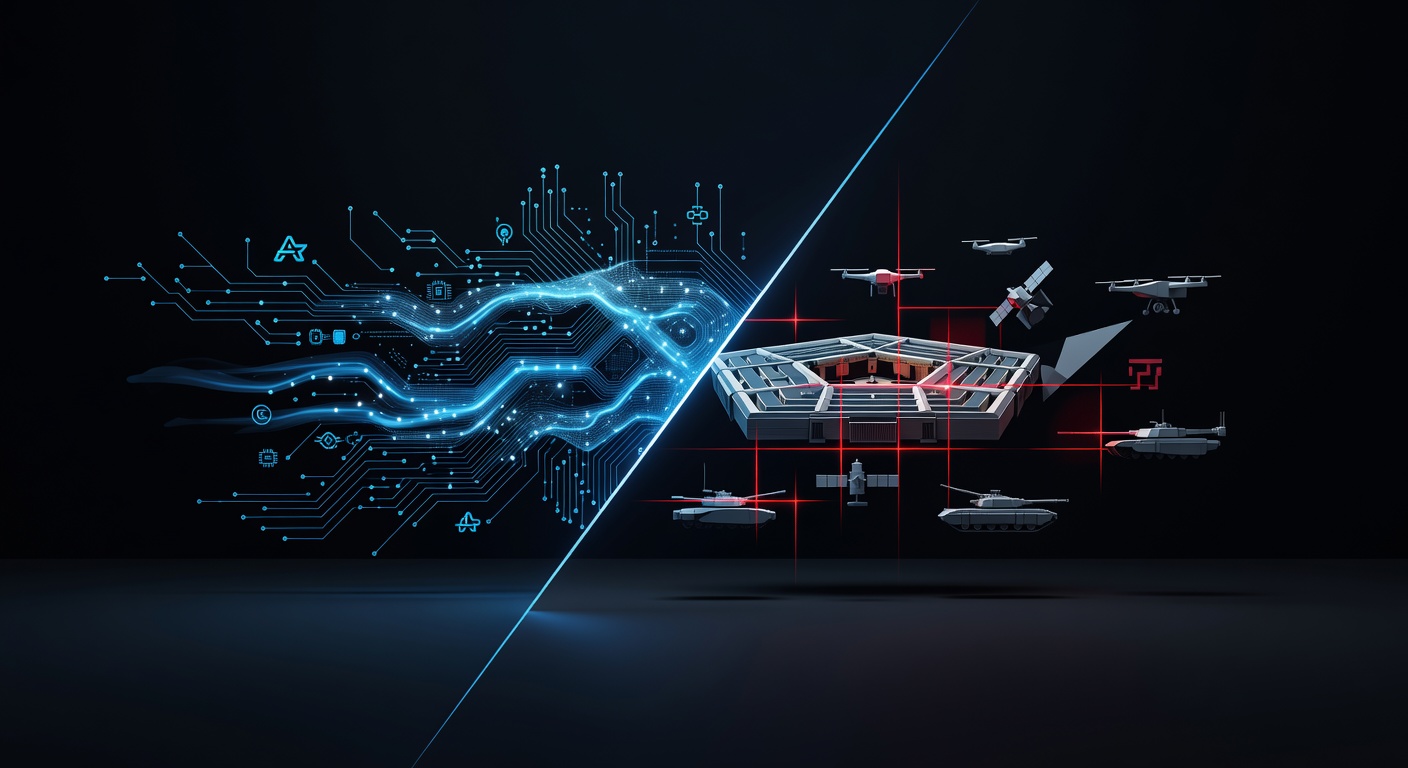

This whole situation has me scratching my head about where we're headed with AI and military applications. On one hand, you've got tech companies trying to position themselves as the responsible AI developers. On the other, you have the government saying, "Thanks, but we'll decide who's trustworthy around here."

Why This Matters More Than You Think

Here's the thing that really gets me: this isn't just about one company getting snubbed. This is about the fundamental question of who gets to control the future of AI in warfare. And honestly? That should make all of us a little uncomfortable.

Anthropic has built its brand around being the "safety-first" AI company. They've been vocal about AI alignment, responsible development, and all those good things that make us sleep better at night. But apparently, that's not enough for the folks at the Pentagon.

The Bigger Picture Is Pretty Wild

What fascinates me is how this reflects the broader tension in the tech world right now. Companies want to appear ethical and responsible to their users and employees, but they also want those lucrative government contracts. It's like trying to be the cool, progressive tech company while also wanting to build systems that could harm people.

The military has specific needs and requirements that don't always align with Silicon Valley's "move fast and break things" mentality. When you're dealing with systems that could affect national security or, you know, actual human lives, trust becomes paramount.

What This Means for the Future

I can't help but wonder if we're witnessing the beginning of a new kind of tech divide. Will we end up with "military AI companies" and "civilian AI companies"? That feels both inevitable and deeply troubling.

The reality is that AI has become too important to national security for the government to simply trust whatever Silicon Valley serves up. At the same time, the tech companies have the talent and innovation that the military desperately needs.

My Take on This Mess

Look, I get why the Justice Department might be cautious. Trust is earned, not declared. But I also think this highlights a bigger problem: we still don't have clear standards or frameworks for evaluating AI companies when it comes to military applications.

Maybe instead of playing these trust games, we need better oversight, clearer guidelines, and more transparency about what "trustworthy" even means in this context. Because right now, it feels like everyone's making it up as they go along.

What do you think? Should AI companies be eager to work with the military, or is there wisdom in keeping some distance? This debate is just getting started, and frankly, we're all going to be living with the consequences.

Source: https://www.wired.com/story/department-of-defense-responds-to-anthropic-lawsuit