The Problem with Current AI Coding Tests

Here's something that's been bugging me about how we test AI coding abilities: we're asking the wrong questions.

Think about it this way — imagine testing someone's driving skills by only having them parallel park once in perfect conditions. Sure, they might nail it, but what happens when they need to navigate rush hour traffic for months on end?

That's essentially what we've been doing with AI coding assistants. Most tests give the AI a single problem and ask for one solution. The AI writes some code, the code works, and we call it a success. But real software development isn't like that at all.

What Real Coding Actually Looks Like

In the real world, you don't just write code once and walk away. You're constantly:

- Adding new features that interact with existing code

- Fixing bugs that pop up months later

- Refactoring old code to work with new requirements

- Making sure your changes don't break something else entirely

It's messy, iterative, and requires you to think about how your code will evolve over time. A quick hack that works today might create a nightmare six months from now.

Enter SWE-CI: The Long Game Test

Researchers recognized this gap and built a benchmark called SWE-CI, which they describe as the first to test whether AI can handle long-term code maintenance.

Instead of one-shot problems, SWE-CI gives AI agents tasks that mirror real software evolution. We're talking about:

- 100 different coding challenges

- Each spanning an average of 233 days of development history

- Requiring 71 consecutive commits on average

- Multiple rounds of analysis and coding iterations

This is fascinating because it's the first time we're testing whether AI can think about code maintainability rather than just code correctness.

Why This Matters More Than You Think

Here's a sobering statistic: maintenance activities eat up 60-80% of a software project's total costs. That's not a typo — most of your development budget goes toward keeping existing code working, not writing new features.

Yet until now, we've mostly been testing AI on the remaining 20-40% of the job.

The researchers cite Lehman's Laws of software evolution, which state, roughly, that a system in active use must keep changing, and that its complexity grows and its quality declines unless someone actively works to prevent it. It's like entropy for code: things get messier and more complex as you add features and fix bugs.

What This Means for AI Development

I think SWE-CI represents a major shift in how we should evaluate coding AI. Instead of asking "Can this AI write working code?" we should be asking "Can this AI write code that humans can actually work with long-term?"

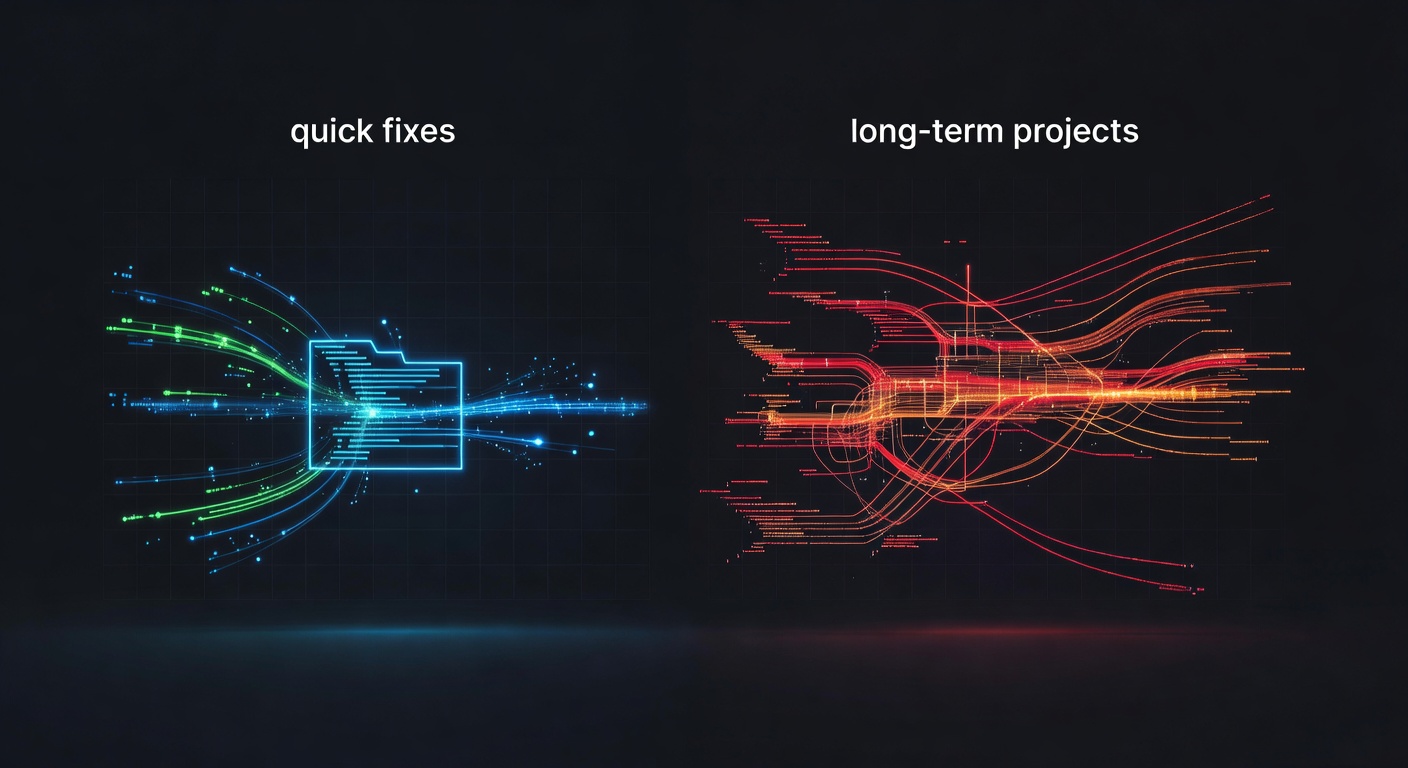

The difference between these two questions is huge. One AI might hardcode a quick fix that passes all the tests, while another writes clean, extensible code that's easy to modify later. Under most current benchmarks, both would get the same score. But in real-world development, the second approach is far more valuable.
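Here's a toy illustration of that point. It's entirely hypothetical and not taken from SWE-CI's actual tasks: the function names, the tax scenario, and the 8% and 5% rates are all invented for this example. Both versions pass the original test, but only one survives the next requirement change.

```python
# Hypothetical example (not from SWE-CI): two "solutions" to the same test.

def total_hardcoded(subtotal: float) -> float:
    # "Quick fix": special-cases the exact value the test checks.
    if subtotal == 100:
        return 108.0
    return subtotal  # every other input silently gets no tax


# Hypothetical rate table; adding a region means adding one entry.
TAX_RATES = {"default": 0.08}


def total_extensible(subtotal: float, region: str = "default") -> float:
    # Looks up the rate instead of baking in the test's expected answer.
    # round() to cents hides float noise such as 108.00000000000001.
    return round(subtotal * (1 + TAX_RATES[region]), 2)


# The original one-shot test: both versions pass.
assert total_hardcoded(100) == 108.0
assert total_extensible(100) == 108.0

# A later requirement: a new region with a 5% rate, and other subtotals.
TAX_RATES["region_b"] = 0.05
print(total_extensible(200.0, "region_b"))  # 210.0
print(total_hardcoded(200.0))  # 200.0, silently wrong
```

A single-shot benchmark that only runs the first test can't tell these two apart. A benchmark that replays later changes against the same codebase, which is what SWE-CI sets out to do, would expose the difference.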

The Bigger Picture

This research highlights something I've been thinking about a lot lately: we need AI that thinks like senior developers, not junior ones.

Junior developers often focus on making code work. Senior developers focus on making code that's easy to change, debug, and extend. They think about the developer who will inherit their code six months from now (which might be themselves).

SWE-CI is the first benchmark I've seen that actually tests for this kind of long-term thinking.

Looking Forward

I'm genuinely excited to see how current AI models perform on SWE-CI. My gut feeling is that most will struggle with the long-term maintenance aspects, even if they're great at solving individual coding problems.

But that's not necessarily bad news — it gives us a clear direction for improvement. Instead of just making AI write more code faster, we need to make it write better code that stands the test of time.

What do you think? Have you noticed differences in how AI coding assistants handle quick fixes versus longer-term projects? I'd love to hear your experiences in the comments.

Source: https://arxiv.org/pdf/2603.03823